SERVICES

Technical oversight services

Start with a fixed-scope check when you need a clear decision. Keep me involved as Technical Operating Partner when the technical risk is ongoing.

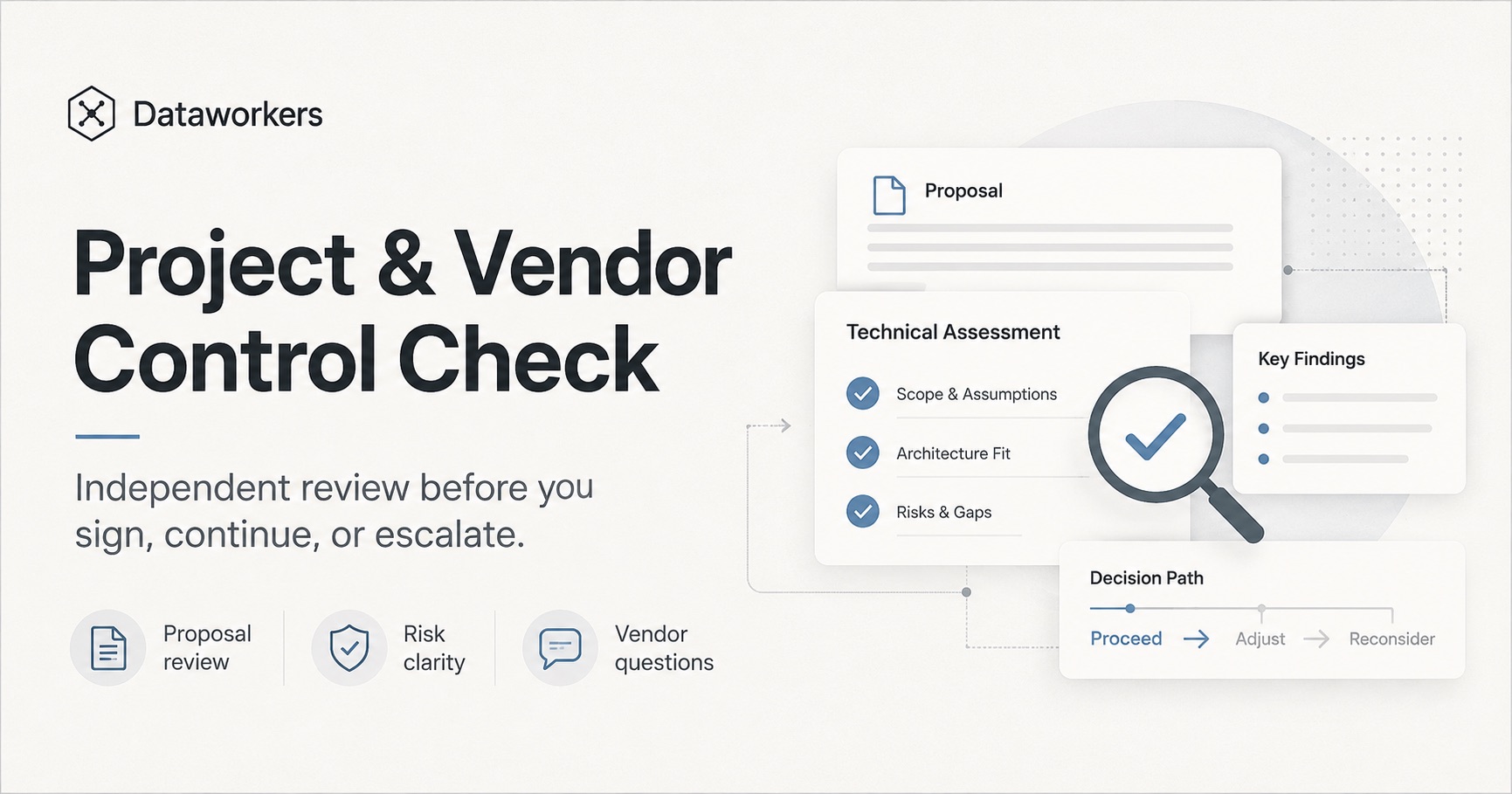

Project & Vendor Control Check

An independent review of a software proposal, estimate, project plan, or technical direction before you sign, continue, or escalate.

Starting point

From €750

Prices exclude VAT.

Who it's for

- Owners or managers about to sign a software vendor proposal

- Companies already in a project that feels slow, unclear, or expensive

- Teams that need an independent read on an estimate, scope, architecture, or delivery plan

- Founders who want a second opinion before scaling a prototype

Problems I solve

- The vendor proposal is hard to evaluate

- The project is dragging and nobody can explain the real risk

- You do not know whether the estimate, architecture, or delivery plan makes sense

- The company is becoming dependent on one supplier or one person

- AI or automation claims sound attractive, but the practical value is unclear

What I do

1. Review the proposal, estimate, scope, architecture assumptions, and project plan

2. Identify missing decisions, lock-in risk, delivery risk, and acceptance criteria gaps

3. Check AI or automation claims only where they affect cost, quality, or ownership

4. Write a short memo with what looks reasonable, what is risky, and what to ask next

What I need (typical)

- Vendor proposal, estimate, project plan, or relevant technical notes

- Current business goal and project context

- One call with the owner, manager, or founder responsible for the decision

- Architecture notes, screenshots, or access only when needed for the scope

What you get

- 2-4 page written memo

- What looks reasonable

- What looks risky

- Missing decisions and acceptance criteria gaps

- Questions to ask the vendor before continuing

- Recommendation: sign, clarify, renegotiate, stop, or review deeper

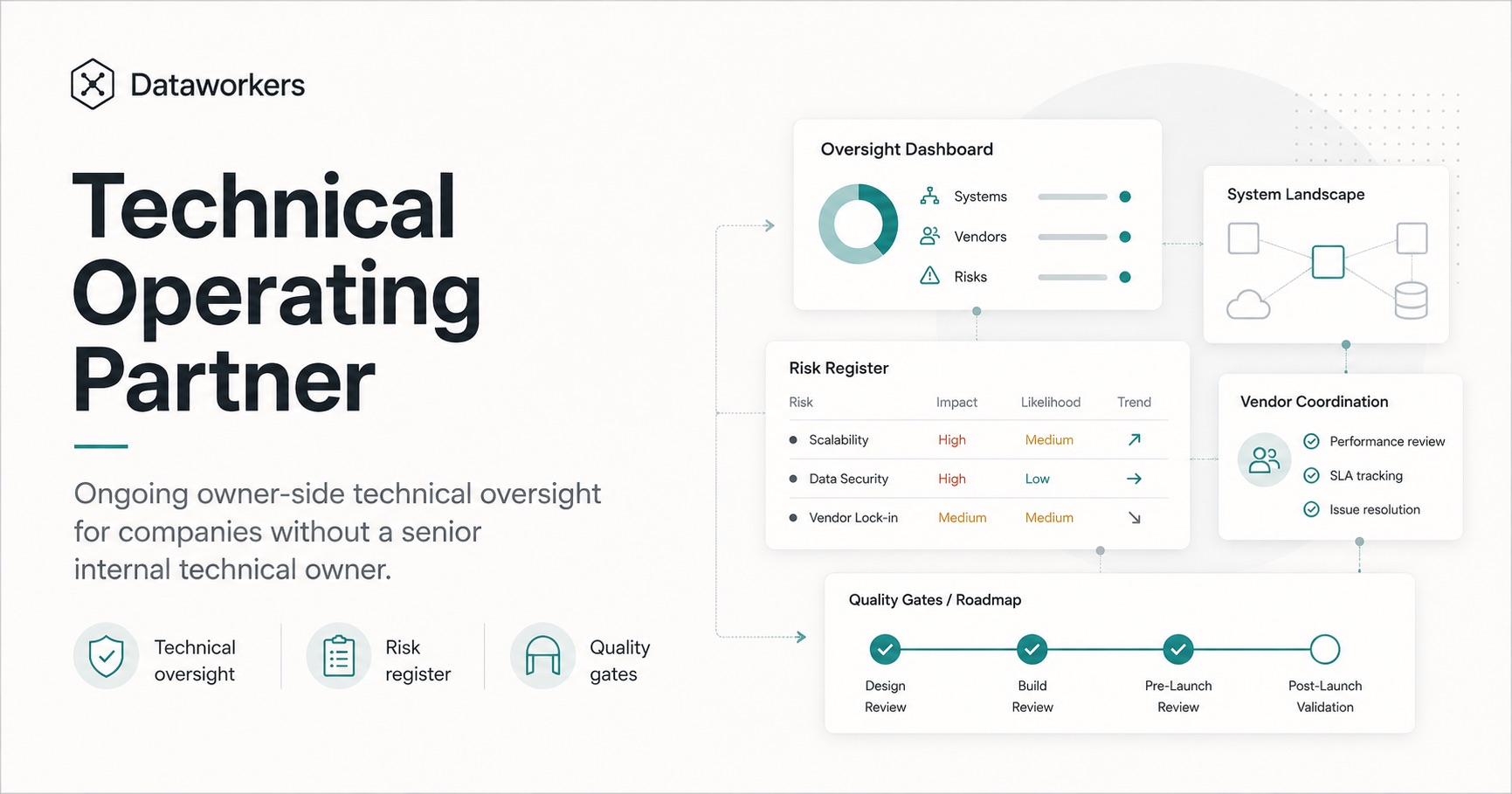

Technical Operating Partner

Ongoing owner-side technical oversight for companies without a senior internal technical owner.

I represent your side technically, so management does not have to rely solely on what vendors or developers say.

Controlled automation is part of the work only where it makes sense: once the workflow, owner, inputs, quality criteria, and failure cases are clear.

Starting point

From €2,500/month

Prices exclude VAT.

Who it's for

- Owners and CEOs without senior internal technical leadership

- Companies dependent on external software vendors

- Firms with custom software, internal tools, CRM/ERP integrations, portals, dashboards, or automation

- Teams where developers exist, but no one owns architecture, delivery discipline, or long-term technical risk

Problems I solve

- Vendor proposals and technical decisions are hard to evaluate

- Software projects keep slipping

- Developers optimize local tasks, but no one owns the whole system

- The company is accumulating hidden technical debt

What I do

1. Represent your side technically in key vendor and project conversations

2. Review proposals, estimates, roadmap choices, and architecture decisions

3. Maintain a simple risk register and decision log

4. Define acceptance criteria and quality gates

5. Translate technical trade-offs into business consequences

What I need (typical)

- Access to vendor proposals, project plans, roadmap, and relevant technical artifacts

- A management contact who can make or escalate decisions

- Regular project or vendor touchpoints

- Enough visibility to compare technical claims with delivery reality

What you get

- Monthly technical risk register

- Decision log with key technical trade-offs

- Vendor/proposal review notes

- Meeting notes and next technical actions

- Acceptance criteria and quality gates for active work

- Roadmap and estimate sanity checks

- Monthly management summary: what is risky, what is reasonable, what to decide next

Prototype Readiness Review

A technical review for AI-built, no-code, low-code, or fast-developed apps before real users depend on them. The demo works; I check whether it can safely become a product.

Starting point

From €900

Prices exclude VAT.

Who it's for

- Founders who built an MVP with AI coding tools, no-code, or low-code platforms

- Small teams shipping a fast-built internal or customer-facing app

- Companies that built a demo or research tool and now want to use it operationally

- Non-technical founders who need a technical expert on their side

Problems I solve

- A demo can work and still be unsafe as a product

- The demo works, but nobody knows if the architecture is maintainable

- The app was built fast, but security and data handling were not reviewed

- AI-generated code was accepted without anyone fully understanding it

- The product depends on fragile prompts, hidden assumptions, or unstable integrations

- The team does not know whether to continue, stabilize, refactor, or rebuild

What I do

1. Review architecture, maintainability, database structure, and API design

2. Check security, data exposure, authentication, and access control

3. Assess deployment setup, error handling, monitoring, and testability

4. Look for vendor/platform lock-in and AI-generated code risk

5. Review user experience and customer-perceived quality

What I need (typical)

- Repository or app access where relevant

- A short product walkthrough

- Deployment details and current hosting setup

- Known risks, customer commitments, and next launch milestone

What you get

- Short technical risk memo

- Production-readiness checklist

- Blocking risks ranked by severity

- Security, data, deployment, and maintainability notes

- Recommendation: continue, stabilize, refactor, or rebuild

- 30-60 day stabilization plan

Need someone technical on your side before software gets expensive?

Bring me the proposal, project, or prototype. I'll tell you what looks reasonable, what is risky, and what to decide next.

I reply within 1 business day.